AI in education: from 30 students to 30 infinite teachers

Education doesn’t stop after graduation. I realized early in my career that continuous learning is paramount because technology is always evolving. Over the last few years, since my own ChatGPT moment in late 2022, I’ve been focused on learning everything I can about what I believe is the most disruptive technology society has experienced in my lifetime.

I still remember seeing it over the holidays that year. I’ll credit my brother for the demo of ChatGPT and Midjourney. I was already familiar with early AI writing and image tools since we were experimenting with Jasper and Stable Diffusion at work. At the time, those tools still felt immature and the quality just wasn’t quite there.

But this felt different.

Immediately after that demo, I subscribed to ChatGPT. I added AI podcasts to my daily walks and work commutes and began testing what it could actually do in real workflows.

I became the unofficial AI correspondent at family gatherings, running informal surveys at the dinner table.

I started thinking long-term about what this means, especially for my nieces and nephews as they enter the workforce, and whether we’re truly preparing them for what’s coming.

Learning by doing

I grew up in traditional public schools. Both of my parents were educators, as were several of my aunts.

Curiosity and creativity were encouraged.

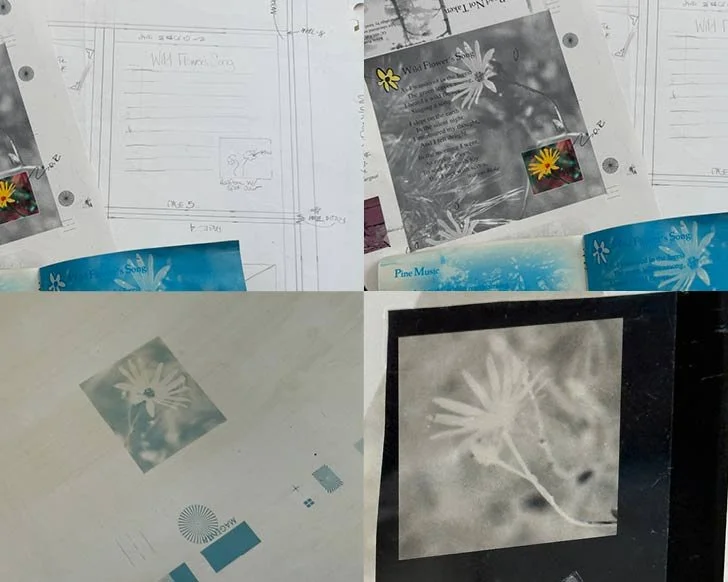

For one week in college, I considered becoming a teacher myself. Instead, I pivoted into Graphic Communications. I was initially drawn in by the creative side of it, but developed a deep appreciation for the more technical side of printing technology: how ink hits paper, how CMYK layers combine, and how presses actually run. It was a hands-on major that also included packaging science, early web design, and seemingly random courses like electricity and machining.

These were the ingredients of the process: ideas, layouts, color adjustments, proofs, press runs. You learned quickly that small inputs create big differences in results.

AI works the same way. The question is whether we’re teaching students to understand the inputs.

We gained an in-depth understanding of printing from start to finish. We concepted, designed, and physically printed our projects. We ran the presses ourselves, learning the ins and outs of screen printing, flexography, and offset lithography. At the end of the day, we cleaned the presses, and ink-stained hands were the norm.

In one memorable incident, my paper web veered off into the gears and I spent hours, late into the night, pulling shredded stock from the machinery.

It was learning by doing – the hard way.

Closer to graduation, internships reinforced that learning outside the classroom. Looking back, the training didn’t prepare me for one job; it prepared me to adapt. That model feels increasingly relevant in the age of AI.

A personal lens on disruption

Early in my career, I experienced disruption firsthand. As the industry shifted from print and analog to digital, companies downsized. Roles disappeared and entire departments were restructured. Work that once required teams of specialists could suddenly be handled by a smaller group with new tools and different skill sets. I watched how quickly technology could disrupt organizations. But I also noticed that the people who leaned in, learned the new tools, and adapted their thinking came out ahead and built entirely new careers.

That experience has stayed with me as I watch AI rapidly evolve. The difference this time is the scale and speed of it all. The print-to-digital transition reshaped one industry, but AI is set to reshape all of them at once.

Which brings me back to education.

If we know this kind of shift is underway, how are classrooms responding?

I asked my dad recently how he sees this evolving. He spent decades teaching high school physics and chemistry, managing a classroom of thirty students at a time. His response was thoughtful and forward-looking: he imagines a future where one teacher in front of thirty students could eventually give way to one student with access to thirty infinitely patient tutors, all PhD-level experts in every field.

I once sat in my dad’s classroom as a student.

Now he imagines each student having thirty tutors of their own.

Different era. Same responsibility to understand.

The traditional classroom model was built around scarcity, with one teacher, limited time and fixed pacing. Much of a teacher’s energy often goes toward meeting benchmarks and compliance expectations, even when those don’t always align perfectly with what might serve each individual student best.

AI changes that constraint. Personalized feedback, adaptive practice, and on-demand learning are no longer hypothetical.

Closer to home, I think about my nephew Austin, who’s in college studying with the goal of becoming an orthopedic doctor.

When I asked him how AI is being adopted at his school, he mentioned a few use cases. Students use AI to turn lecture notes into flashcards and practice exams, and NotebookLM generates podcast-style summaries for review. While he was recently shadowing a doctor, he also saw AI-assisted note systems help streamline patient notes.

As I’ve talked with my other nieces and nephews (who span a wide range of ages), it’s clear that many schools are still approaching AI primarily through the lens of academic integrity. There are understandable concerns about cheating, overreliance, and erosion of critical thinking. However, restriction doesn’t feel like a sustainable long-term strategy.

Rethinking the classroom model

At the same time, some schools are experimenting with entirely different models. I recently heard about Alpha School on the Moonshots podcast, which profiled its approach in detail.

Alpha, based in Texas, has gained attention for compressing traditional academics into roughly two hours per day using AI tutors. Students move through core subjects at their own pace with adaptive systems providing immediate feedback. The remainder of the day is dedicated to life skills, project-based learning, and collaborative work.

It’s a radical departure from the fixed pacing most of us grew up with.

In this model, AI doesn’t replace teachers. Instead, educators (called “guides” at Alpha) shift into coaching roles, focusing on motivation, accountability, and applied thinking, while AI handles repetition and personalization.

Reactions to Alpha are mixed. Some see innovation and efficiency. Others raise concerns about screen time, access, and whether deep learning can truly be compressed. Those are all valid questions.

Given the cost of this model, I don’t know whether it will become widely adopted. But it signals a positive structural shift, one where educators are freed to spend more time on mentorship and higher-level guidance.

For the first time, tutoring at scale is technically possible. The constraint my dad described (one teacher, thirty students, limited hours in a day) no longer has to be a barrier.

Structure around the tool

Recent university research suggests that outcomes depend less on whether AI is available and more on how it’s integrated into the learning process.

In one study led by Wharton researchers, students were divided into groups with varying levels of AI access during math assignments. Some had broad, unrestricted use; others worked within clearer guardrails. When later assessed without AI assistance, the differences were noticeable: students who had relied heavily on unstructured AI support struggled more once the tool was removed. The ease of access had reduced the cognitive friction that normally strengthens retention and reasoning.

At Harvard, a physics course experimented with a different design. Students moved through material in a more self-paced format with AI-supported feedback built directly into the learning environment. Instead of replacing thinking, the system reinforced it by offering immediate correction, prompting explanation, and guiding problem-solving in structured ways. Students reported high engagement and demonstrated strong conceptual understanding.

The contrast matters. AI didn’t automatically weaken learning, and it didn’t automatically improve it; in both cases, the outcomes were shaped by how intentionally the tool was embedded into the curriculum. If AI becomes a shortcut, it can flatten learning. If it becomes a scaffold, it can strengthen it.

Beyond the classroom

I’m fortunate to work in an environment with forward-thinking leadership that has encouraged responsible experimentation with AI tools from the start. We’ve had room to explore in sandboxed systems, protect intellectual property, test workflows, and share what works. Instead of treating AI as something to fear or ban outright, we treat it as a tool to understand deeply and use intentionally.

From discussions with friends in other organizations, I’m finding that not every workplace operates that way. And most classrooms don’t either (yet).

If students graduate from systems that prohibit AI entirely, they will need to build foundational tool literacy quickly. If they graduate from systems that over-rely on it, they may need to strengthen independent reasoning and judgment.

Either way, upstream educational decisions shape downstream workforce readiness. The habits students build now (whether avoidance, dependence, or discernment) don’t disappear at graduation.

I don’t have definitive answers, and I’m not looking to solve education reform in one blog post. But I’ve seen what happens when change arrives faster than organizations expect.

So I’ll keep running informal surveys at the dinner table, asking my nieces and nephews how they’re using AI and what their teachers are saying about it.

Sometimes paying attention early is the most practical thing you can do.